Wednesday 31st July, 2013

Extreme Startup Game

Last week the four of us and some friends from MakieLab and beyond got together to play the Extreme Startup Game, hosted by Rob Chatley.

Split into pairs, each team had to write a bit of software that would respond to requests from Rob’s central server. The requests took the form of simple but increasingly esoteric questions that our software had to answer.

Scoring

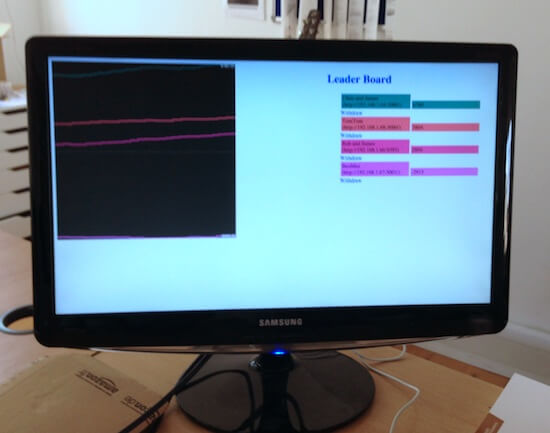

For each answer our software got right, our team was awarded some points, and the more esoteric the question, the more points it was worth. However, for each answer we got wrong, failed to attempt, or returned an error for, we lost points. And thus the game goes, with points visible on a leaderboard throughout.

Strategy

One team chose to use Sinatra to build their software, two teams chose to build their applications in Rails, and one used Python. But it seems likely that technology choice was an almost insignificant factor, because in any high-level language it’s a matter of minutes to produce a fairly comprehensive base web application. Instead, more significant was each team’s ability to monitor their score and recover from penalisation. As James M says:

Our main problem was that early on we didn’t keep an eye on how we were doing so we were penalised quite a bit and never really recovered…

… another useful thing we did was to print out clear details about the failed request, but I wish we’d gone further with this - perhaps automatically retrieving the score from the server for that failed request.

Tom has similar thoughts:

I think one downside of our approach was that it never felt we had time to get any sort of product strategy together - which requests to concentrate on, etc. We also could definitely have made it further if we’d skipped testing some of the simplest answers…

Speaking for Chris and me, we definitely made a conscious effort to keep looking at our score and to handle the most significant penalties first, and I think that was probably what largely contributed to our ultimate victory.

Testing

But what about the relative merits of our implementations? Most of the players would identify relatively strongly with the practice of Test Driven Development, but given the short timescales and nature of the game, did anyone actually do that? And if so, did it help?

It seems like Team Tom (our Tom and Tom Stuart) did do some TDD:

Tom and I wrote tests before each request we handled, which helped give us the confidence to change our nice set of Regexes to the nasty and distasteful eval hack.

More about that ‘eval hack’ later, but James M – author of Mocha, if you can believe it – has a confession to make:

Rob & I did not write automated tests … We thought about refactoring, but did very little because we were worried about breaking stuff

Shocking ;-)

Chris and I didn’t write a single test until quite late in the game, when we wanted to perform a bit of refactoring, and even then the test was generated by copying and pasting a manual list of examples that we’d kept as the game played out. Writing the test did give us a lot of confidence about “deploying” our refactoring, since as other teams noticed, screwing up your application incurred quite a penalty. James M again:

One useful thing we did was to use shotgun with Sinatra so that we had instant deployment, although we did have to be careful not to hit “save” halfway through an edit.

Code quality

So, very few tests and hardly any refactoring in any of the teams. What about code quality? Well, I can tell you that every single team had a single controller with a giant case statement in it, so the code is about as smelly as it gets. Quoting James M, but effectively pretty much everyone:

We had a single “case” statement with pretty strict regexps which we just gradually added more “when” handlers to.
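To give a flavour of what that looks like, here is a minimal sketch in Ruby rather than any team's actual code. The method names `answer` and `square_and_cube?` are made up for illustration, and the wording of the "plus" and "largest" questions is an assumption; only the square-and-cube question appears in this post.

```ruby
# Hypothetical helper: true when n is both a perfect square and a perfect cube.
def square_and_cube?(n)
  square_root = Integer.sqrt(n)      # integer square root, rounded down
  cube_root = Math.cbrt(n).round     # nearest integer to the cube root
  square_root * square_root == n && cube_root**3 == n
end

# Hypothetical answer method: one "when" per question type, added as new
# question types turn up. Question wordings other than the square-and-cube
# one are assumed for the sake of the example.
def answer(question)
  case question
  when /\Awhat is (\d+) plus (\d+)\??\z/
    ($1.to_i + $2.to_i).to_s
  when /\Awhich of the following numbers is the largest: (.+)\z/
    $1.scan(/\d+/).map(&:to_i).max.to_s
  when /\Awhich of the following numbers is both a square and a cube: (.+)\z/
    $1.scan(/\d+/).map(&:to_i).select { |n| square_and_cube?(n) }.join(", ")
  else
    "" # unknown question: return something rather than raise an error
  end
end
```

The strict anchors (`\A` and `\z`) mean an unfamiliar question falls through to the `else` branch instead of being half-matched by an earlier handler.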

Not only that, but every team’s implementation for handling a certain subset of questions does some trivial input manipulation and then calls eval on it! If Rob’s server was feeling mischievous it could easily have sent a question along the lines of rm -fr / and all of our pretend startups would’ve failed in a blaze of glory.
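For contrast, here is a rough sketch of how arithmetic questions could be handled without eval, by mapping a fixed set of words onto Ruby operators. None of the teams wrote this; the `OPERATIONS` table, the `calculate` method and the question wording are all assumptions made for the example.

```ruby
# Hypothetical whitelist of operation phrases and the Integer methods they map to.
OPERATIONS = {
  "plus" => :+,
  "minus" => :-,
  "multiplied by" => :*
}.freeze

# Hypothetical replacement for the eval hack: only input matching the strict
# pattern is ever turned into a method call; everything else returns nil.
def calculate(question)
  match = question.match(/\Awhat is (\d+) (plus|minus|multiplied by) (\d+)\??\z/)
  return nil unless match

  left = match[1].to_i
  right = match[3].to_i
  left.public_send(OPERATIONS.fetch(match[2]), right)
end
```

It is a few more lines than an eval one-liner, which is presumably why nobody bothered under time pressure, but a hostile question simply fails to match and nothing gets executed.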

Winning the game

But security issues aside, is the fact that we abandoned our long-practiced development skills actually a bad thing? In this case, the game isn’t about being the best software developer, or producing maintainable code. Instead, it’s deliberately designed to force us to consider other priorities, and balance the “business” needs and demands of the market against our desire to refactor and test and write beautiful little classes.

Naturally, the scenario is a bit contrived, but we certainly found it a fascinating and refreshing interlude to our normal set of practices. I think it would be very interesting to try and play a version of the game out over a longer period of time, to see at what point – if any! – tests and refactoring start to pay dividends within the overall strategy.

If you get the chance to have Rob run the Extreme Startup game for your team, we can highly recommend it as a fun and insightful afternoon. In fact, why not start playing right now: which of the following numbers is both a square and a cube: 330, 9, 64, 46656?

– James
